
Running large language models on your own computer used to be something only developers and data scientists attempted. Today, tools like KoboldCpp have made local AI surprisingly accessible, even for beginners. Whether you are interested in creative writing, roleplaying, experimenting with AI models, or simply exploring how local language models work, KoboldCpp offers a straightforward way to get started without needing advanced programming skills.

TL;DR: KoboldCpp is a lightweight application that lets you run AI language models directly on your own computer. It is especially popular for storytelling, roleplay, and offline AI use. Setup is simple compared to many other local AI tools, and it loads models in the widely used GGUF format. If you want privacy, control, and flexibility without relying on cloud services, KoboldCpp is an excellent starting point.

What Is KoboldCpp?

KoboldCpp is a standalone program designed to run large language models (LLMs) locally on your PC. It is built on llama.cpp and adds features tailored for ease of use and storytelling tasks. In practical terms, it allows you to download an AI model file and interact with it through a browser interface, with no internet connection needed after setup.

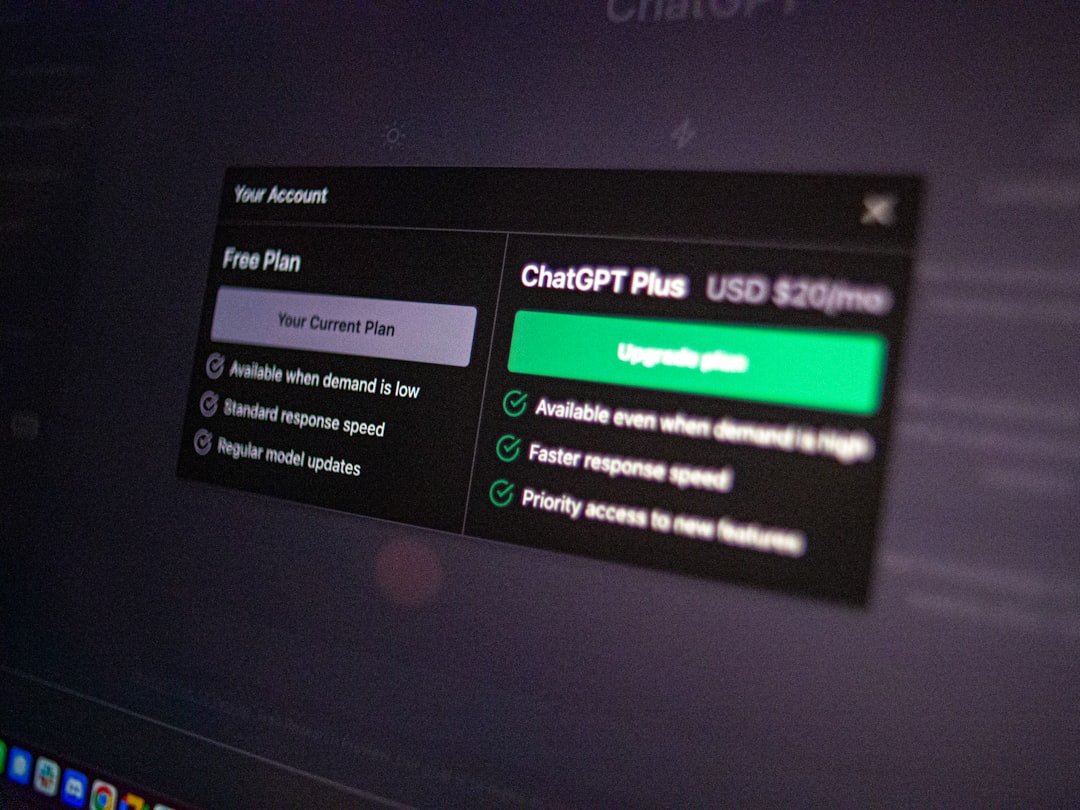

Unlike cloud-based AI platforms:

- Your data stays on your machine.

- No subscription is required.

- You can experiment freely without usage limits.

For many beginners, this local-first approach is both empowering and reassuring.

Why Use KoboldCpp Instead of Online AI?

There are many online AI services available today. So why bother installing something locally?

Here are several compelling reasons:

- Privacy: Your conversations never leave your computer.

- Offline Access: No internet connection required after setup.

- Customization: You can choose from many different models.

- No Censorship Constraints: You control the behavior of the AI model you install.

- Performance Control: Adjust settings to match your hardware.

For creative writers and hobbyists, this level of control makes KoboldCpp particularly appealing.

How KoboldCpp Works (In Simple Terms)

At its core, KoboldCpp loads a language model file into your computer’s memory and runs it using your CPU or GPU. The program acts as a bridge between the raw AI model and an easy-to-use browser interface.

Here’s a simplified breakdown of what happens:

1. You download a compatible AI model file (usually in GGUF format).

2. You launch KoboldCpp.

3. You select the model file.

4. KoboldCpp loads the model into RAM or VRAM.

5. You interact with the AI through a web-based interface.

Everything runs locally — no cloud processing involved.
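Because the browser interface talks to a server running on your own machine, a script can talk to that server too. The sketch below assumes KoboldCpp is listening on http://localhost:5001 and accepts KoboldAI-style requests at /api/v1/generate; the address, the endpoint, and the field names are assumptions to check against your installed version, and the helper names are purely illustrative.

```python
import json
import urllib.request

# Assumed address of a locally running KoboldCpp instance.
BASE_URL = "http://localhost:5001"


def build_request(prompt, max_length=80, temperature=0.7, base_url=BASE_URL):
    """Build (but do not send) a POST request for the assumed generate endpoint."""
    payload = {
        "prompt": prompt,
        "max_length": max_length,  # number of tokens to generate
        "temperature": temperature,
    }
    return urllib.request.Request(
        base_url + "/api/v1/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_text(response_body):
    """Pull the generated text out of a JSON response body."""
    data = json.loads(response_body)
    return data["results"][0]["text"]


def generate(prompt, **kwargs):
    """Send the prompt to the local server and return the generated text."""
    request = build_request(prompt, **kwargs)
    with urllib.request.urlopen(request, timeout=120) as response:
        return extract_text(response.read().decode("utf-8"))
```

With KoboldCpp running and a model loaded, `generate("Once upon a time")` would return the model's continuation. The request only ever goes to localhost, which is what "no cloud processing" means in practice.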

System Requirements: What Do You Need?

One of the best features of KoboldCpp is that it can run on modest hardware, depending on the model size you choose.

Minimum recommendations:

- 8 GB RAM (for very small models)

- A modern CPU

- Windows, Linux, or macOS

More comfortable setup:

- 16–32 GB RAM

- Dedicated GPU (NVIDIA preferred for acceleration)

- SSD storage for faster load times

The key idea is this: bigger models require more memory and processing power. Beginners are often better off starting with smaller 7B or 13B parameter models that are quantized (compressed) for efficiency.

Understanding Models and Quantization

KoboldCpp itself does not include an AI model. It is the program that runs one, and the model is a separate file that you download, created by researchers or enthusiasts.

Popular model sizes include:

- 7B parameters – Fast and lightweight.

- 13B parameters – Balanced performance and coherence.

- 30B+ parameters – Higher quality but demanding hardware.

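Quantization reduces a model’s file size and memory requirements by storing each weight with fewer bits. The level usually appears in the model’s file name (for example, Q4_K_M or Q8_0), and the number is roughly the bits used per weight. You might see levels like: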

- Q4

- Q5

- Q8

In simple terms:

- Lower numbers = less RAM usage but slightly reduced accuracy.

- Higher numbers = better quality but more memory needed.

For beginners, a 7B Q4 or Q5 GGUF model is often a safe choice.
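To see why model size and quantization level matter together, a rough estimate helps. The sketch below multiplies the parameter count by the bits per weight to approximate the size of the weights alone. Real GGUF files are somewhat larger than this because they also store scaling data and metadata, and the 2 GB overhead used in the fit check is an assumed placeholder, not a measured figure.

```python
def estimate_model_size_gb(params_billions, bits_per_weight):
    """Rough size of the weights alone, in gigabytes (10^9 bytes)."""
    total_bits = params_billions * 1e9 * bits_per_weight
    return total_bits / 8 / 1e9


def fits_in_memory(params_billions, bits_per_weight, available_gb, overhead_gb=2.0):
    """True if the weights plus an assumed fixed overhead fit in memory."""
    needed = estimate_model_size_gb(params_billions, bits_per_weight) + overhead_gb
    return needed <= available_gb


if __name__ == "__main__":
    for size in (7, 13, 30):
        for bits in (4, 5, 8):
            gb = estimate_model_size_gb(size, bits)
            print(f"{size}B at ~{bits} bits/weight: about {gb:.1f} GB")
```

By this estimate a 7B model at about 4 bits per weight needs roughly 3.5 GB for its weights, which is why it is a comfortable fit on an 8 GB machine, while a 30B model at 8 bits needs about 30 GB.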

Installing KoboldCpp: Step-by-Step Overview

Getting started is easier than many expect.

1. Download KoboldCpp from its official source.

2. Download a GGUF model that matches your hardware capabilities.

3. Run the executable file (no complex installation required in most cases).

4. Select your model through the interface.

5. Click “Launch” and open the provided local web address.

Within minutes, you’ll have a functioning local AI chat interface.
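If you prefer to skip the launcher window, KoboldCpp can also be started from the command line. The sketch below builds such a command in Python. The flag names (`--model`, `--contextsize`, `--gpulayers`, `--port`) are assumptions based on commonly documented KoboldCpp options, so confirm them with the program's `--help` output; the executable and model paths are hypothetical.

```python
import subprocess


def build_launch_command(executable, model_path, context_size=4096, gpu_layers=0, port=5001):
    """Return the argument list for starting KoboldCpp with a given model."""
    command = [
        executable,
        "--model", model_path,
        "--contextsize", str(context_size),
        "--port", str(port),
    ]
    # Only request GPU offloading when some layers should go to the GPU.
    if gpu_layers > 0:
        command += ["--gpulayers", str(gpu_layers)]
    return command


def launch(executable, model_path, **options):
    """Start KoboldCpp as a background process and return its handle."""
    return subprocess.Popen(build_launch_command(executable, model_path, **options))
```

For example, `launch("./koboldcpp", "models/example-7b.Q4_K_M.gguf", gpu_layers=20)` would start the server with a hypothetical model file, after which the web interface is available at the local address the program prints.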

Key Features Beginners Should Know

KoboldCpp includes several powerful features that make it stand out:

1. Story Mode

This is perfect for creative writers. The AI continues a story based on your prompts, maintaining tone and structure.

2. Chat Mode

Interact conversationally with the AI, similar to popular online chatbots.

3. Adjustable Settings

You can modify:

- Temperature (creativity level)

- Top-k and Top-p sampling

- Prompt formatting

- Context length

These settings directly affect how creative, random, or focused the generated responses will be.
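Top-k and top-p are easier to understand on a toy example. The sketch below applies both filters to a made-up probability table for the next word. It illustrates the general technique and is not KoboldCpp's internal code; the words and probabilities are invented.

```python
def top_k_filter(probs, k):
    """Keep the k most likely tokens and renormalize their probabilities."""
    kept = sorted(probs.items(), key=lambda item: item[1], reverse=True)[:k]
    total = sum(prob for _, prob in kept)
    return {token: prob / total for token, prob in kept}


def top_p_filter(probs, p):
    """Keep the smallest set of top tokens whose cumulative probability reaches p."""
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)
    kept, cumulative = [], 0.0
    for token, prob in ranked:
        kept.append((token, prob))
        cumulative += prob
        if cumulative >= p:
            break
    total = sum(prob for _, prob in kept)
    return {token: prob / total for token, prob in kept}


if __name__ == "__main__":
    # Invented next-word probabilities for "She opened the ..."
    next_word = {"door": 0.5, "window": 0.3, "dragon": 0.15, "spreadsheet": 0.05}
    print(top_k_filter(next_word, 2))
    print(top_p_filter(next_word, 0.9))
```

Here top-k with k=2 leaves only "door" and "window", while top-p at 0.9 also keeps "dragon" because the first two words cover only 80% of the probability. Both settings cut off the unlikely tail, which is what keeps output focused.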

4. GPU Acceleration

If you have a compatible GPU, KoboldCpp can dramatically improve response speed by offloading some or all of the model’s layers from the CPU to the GPU.

Common Use Cases

While KoboldCpp can technically handle many text-based tasks, it is especially popular in certain communities.

- Creative writing assistance

- Roleplaying games

- Interactive fiction

- World-building

- Private AI experimentation

Writers often use it to brainstorm plot twists, generate dialogue, or expand on rough story outlines.

Tips for Better Results

Getting good output from a local language model takes some experimentation with prompts and settings.

Write Clear Prompts

The AI works best when given specific instructions. Instead of writing “Tell me a story,” try:

“Write a suspenseful short story about a detective stranded in a snowstorm, written in first-person perspective.”

Adjust Temperature Gradually

- Lower temperature (0.5–0.7) = more predictable responses.

- Higher temperature (0.8–1.2) = more creative but less stable results.
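The effect of temperature can be shown with a few lines of arithmetic. Language models score each candidate token, and temperature divides those scores before they are turned into probabilities. The sketch below uses three made-up scores to show how a low temperature concentrates probability on the top choice while a high temperature spreads it out.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw scores into probabilities after dividing by the temperature."""
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(score - peak) for score in scaled]
    total = sum(exps)
    return [value / total for value in exps]


if __name__ == "__main__":
    scores = [2.0, 1.0, 0.1]  # invented scores for three candidate tokens
    for temperature in (0.5, 0.7, 1.0, 1.2):
        probs = softmax_with_temperature(scores, temperature)
        print(temperature, [round(p, 3) for p in probs])
```

Running it shows the top token's share shrinking as the temperature rises, which is why small steps are advisable: each change reshapes the odds of every candidate at once.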

Use Context Wisely

Longer context windows allow the AI to remember more of the conversation. However, they also use more RAM.
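The extra memory comes mainly from the key-value cache, which stores data for every token in the context. The sketch below estimates its size with the standard formula for transformer models. The default shape (32 layers, 32 key-value heads, head dimension 128, 16-bit values) is an assumption typical of a 7B Llama-style model without grouped-query attention; your model may differ, and some models use far less.

```python
def kv_cache_bytes(context_tokens, n_layers=32, n_kv_heads=32, head_dim=128, bytes_per_value=2):
    """Estimate key-value cache size in bytes for a given context length."""
    # Factor 2: one key vector and one value vector per token, per layer.
    return 2 * n_layers * context_tokens * n_kv_heads * head_dim * bytes_per_value


if __name__ == "__main__":
    for tokens in (2048, 4096, 8192):
        gib = kv_cache_bytes(tokens) / 1024**3
        print(f"{tokens} tokens of context: about {gib:.1f} GiB of cache")
```

Under these assumptions the cache grows linearly: doubling the context length doubles the memory it needs, on top of the memory used by the model weights.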

Advantages and Limitations

No software is perfect, and KoboldCpp is no exception.

Advantages

- Free and open-source

- Private and offline

- Flexible model support

- Quick setup

- Strong storytelling tools

Limitations

- Output quality and speed depend on your hardware, since it limits the model size you can run

- Large models require significant RAM

- Setup can still feel technical to absolute beginners

- No built-in updates to models — you manage them yourself

Is KoboldCpp Safe?

Because KoboldCpp runs locally and does not send your data to external servers, it is generally considered very safe from a privacy standpoint. However, you should:

- Download models from reputable sources.

- Keep your system updated.

- Verify files when possible.

As with any downloaded software, basic cybersecurity practices apply.
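Verifying a file usually means comparing its checksum with one published by the source. When a model page lists a SHA-256 hash, a short script using Python's standard `hashlib` module can check it. The function names and the example file name are illustrative.

```python
import hashlib


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Compute the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read in chunks so multi-gigabyte model files do not fill memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_hash(path, expected_hex):
    """Compare a file's digest with the hash published by the download source."""
    return sha256_of_file(path) == expected_hex.strip().lower()
```

Calling `matches_published_hash("example-7b.Q4_K_M.gguf", published_hash)` returns True only when the downloaded file is byte-for-byte identical to the one the publisher hashed.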

Who Should Try KoboldCpp?

KoboldCpp is ideal for:

- Writers who want private AI assistance

- AI enthusiasts experimenting with local models

- Gamers interested in AI-driven storytelling

- Privacy-focused users avoiding cloud services

If you are simply looking for instant, polished, high-end AI with no setup, cloud tools may be easier. But if you value control, customization, and learning how AI works under the hood, KoboldCpp offers a rewarding experience.

Final Thoughts

KoboldCpp represents an important shift in how people interact with artificial intelligence. Instead of relying entirely on powerful corporate servers, everyday users can now run impressive language models directly from their own machines. While there is a small learning curve, the freedom and flexibility it provides make it worth exploring.

For beginners curious about local AI, KoboldCpp is one of the most approachable entry points available today. With the right model and realistic expectations based on your hardware, you can build a personal AI workspace that works entirely on your terms.

And once you experience the freedom of running AI locally, it becomes clear: this is not just a technical tool — it is a glimpse into the decentralized future of artificial intelligence.