
As artificial intelligence becomes central to modern software products, businesses are searching for ways to make AI systems more accurate, reliable, and context-aware. One of the most promising approaches is Retrieval-Augmented Generation (RAG), a technique that enhances large language models by connecting them to external knowledge sources. Retrieval-Augmented Generation software enables developers to build smarter AI applications that deliver fact-based, up-to-date, and domain-specific responses instead of relying solely on pre-trained model knowledge.

TLDR: Retrieval-Augmented Generation (RAG) software improves AI applications by combining large language models with real-time information retrieval from external data sources. This approach increases accuracy, reduces hallucinations, and allows organizations to use their own documents as trusted knowledge bases. RAG tools simplify data indexing, vector search, and orchestration, making it easier to build reliable AI assistants. Businesses use RAG to create chatbots, search engines, knowledge assistants, and enterprise tools that deliver context-aware answers.

What Is Retrieval-Augmented Generation?

Retrieval-Augmented Generation is an AI architecture that combines two key components:

- Retriever: Searches external data sources (documents, databases, APIs, file systems).

- Generator: A large language model (LLM) that produces natural language responses using the retrieved information.

Instead of generating answers purely from its training data, the model first retrieves relevant information from a custom knowledge base. That information is then injected into the prompt, allowing the model to generate responses grounded in factual and domain-specific content.

This architecture significantly improves factual correctness and transparency while enabling AI systems to work with private company data.
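
The prompt injection step described above can be sketched in a few lines. This is a minimal illustration, not any particular framework's API: the function name `build_prompt`, the instruction wording, and the sample passages are all assumptions made for the example.

```python
def build_prompt(question: str, passages: list[str]) -> str:
    """Place retrieved passages ahead of the user's question (hypothetical helper)."""
    # Number each passage so the model can refer back to it.
    context = "\n".join(f"[{i}] {text}" for i, text in enumerate(passages, start=1))
    return (
        "Answer the question using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


# Sample passages standing in for the retriever's output.
passages = [
    "Refunds are accepted within 30 days of purchase.",
    "Shipping takes 3 to 5 business days.",
]
prompt = build_prompt("What is the refund window?", passages)
print(prompt)
```

The resulting string would then be sent to whichever language model the application uses; that call is omitted here because it depends on the provider.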

Why RAG Matters for Smarter AI Apps

Traditional language models have limitations:

- They may produce outdated information.

- They can hallucinate incorrect facts.

- They lack access to proprietary data.

- They cannot easily cite verifiable sources.

RAG overcomes these challenges by grounding responses in real documents. This is especially critical for industries such as:

- Healthcare

- Finance

- Legal services

- E-commerce

- Enterprise IT support

By implementing RAG software, organizations reduce risk while increasing answer reliability and contextual depth.

How Retrieval-Augmented Generation Software Works

Most RAG platforms follow a similar workflow:

- Data ingestion: Upload and parse documents such as PDFs, spreadsheets, websites, or structured databases.

- Chunking: Break large documents into smaller, searchable segments.

- Embedding: Convert text chunks into numerical vector representations.

- Indexing: Store vectors in a vector database for fast similarity search.

- Query processing: Convert the user's query into an embedding vector, using the same embedding model that was applied to the documents.

- Retrieval: Identify the most relevant stored chunks.

- Generation: Provide context to the language model for a grounded response.

This modular architecture allows companies to continuously update their data sources without retraining the entire language model.
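
As a rough illustration of the workflow above, the following sketch runs chunking, embedding, indexing, query processing, and retrieval in plain Python. The word-count `embed` function is only a stand-in for a trained embedding model, the in-memory list stands in for a vector database, and all function names and sample documents are hypothetical.

```python
import math
import re
from collections import Counter


def chunk(text: str, size: int = 12) -> list[str]:
    """Chunking: split text into segments of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text: str) -> Counter:
    """Embedding stand-in: a sparse word-count vector, not a trained model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(documents: list[str]) -> list[tuple[str, Counter]]:
    """Indexing: store each chunk next to its vector (a list instead of a vector DB)."""
    return [(c, embed(c)) for doc in documents for c in chunk(doc)]


def retrieve(index: list[tuple[str, Counter]], query: str, k: int = 2) -> list[str]:
    """Query processing and retrieval: embed the query, return the k closest chunks."""
    query_vector = embed(query)
    ranked = sorted(index, key=lambda item: cosine(query_vector, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


documents = [
    "Employees accrue vacation days monthly. Unused vacation days expire in March.",
    "The VPN client must be updated before connecting to the office network.",
]
index = build_index(documents)
top = retrieve(index, "How do I connect to the VPN?", k=1)
print(top)
```

Adding a new document only requires calling `build_index` on it and extending the list, which mirrors the point that the knowledge base can change without retraining the model.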

Key Benefits of Retrieval-Augmented Generation Software

1. Improved Accuracy

Responses draw on stored documents retrieved at query time rather than only on what the model absorbed during pretraining.

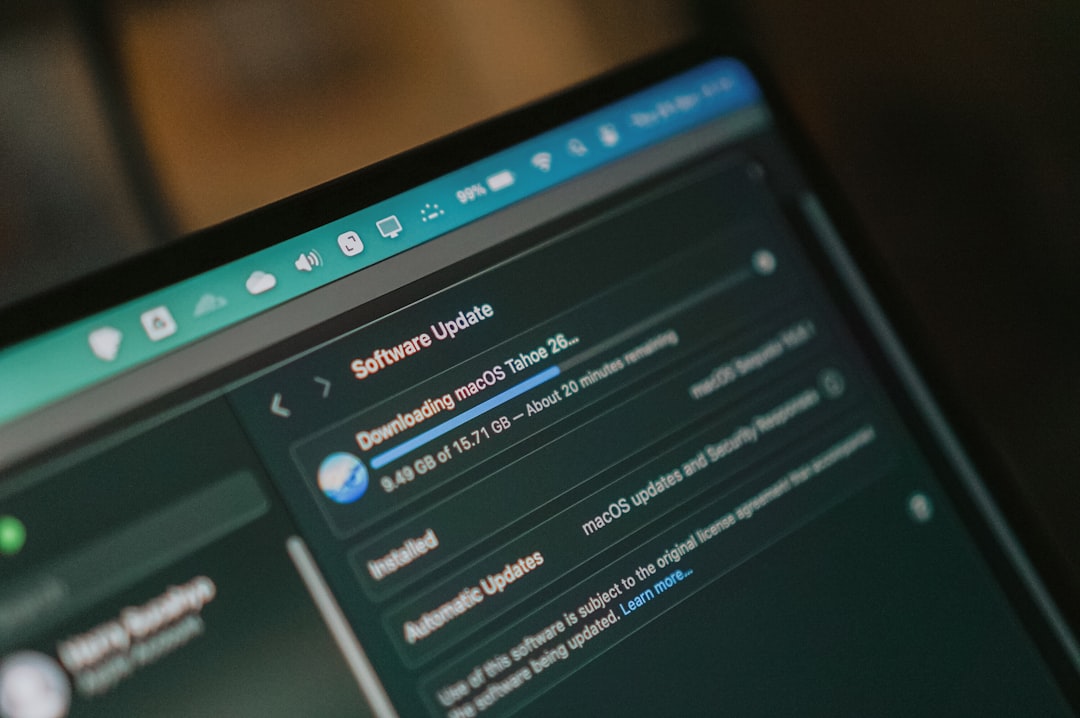

2. Real-Time Knowledge Updates

Companies can update their databases without retraining the LLM.

3. Reduced Hallucinations

Grounding responses in retrieved text lowers the rate of fabricated information, although it does not eliminate it.

4. Enterprise Knowledge Integration

Internal documents, policies, wikis, and product manuals become searchable through natural language.

5. Source Attribution

Many RAG tools provide citations, increasing user trust.
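
One way source attribution could work is to keep a source label next to every retrieved passage and print a numbered reference list alongside the answer. The sketch below assumes that approach; the `Passage` class, both helper functions, and the file names are hypothetical and not taken from any specific RAG tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    text: str
    source: str  # file name or URL of the originating document (illustrative)


def format_context(passages: list[Passage]) -> str:
    """Numbered passages to place in the prompt, so the model can cite [1], [2], ..."""
    return "\n".join(f"[{i}] {p.text}" for i, p in enumerate(passages, start=1))


def format_citations(passages: list[Passage]) -> str:
    """Matching reference list to show the user next to the generated answer."""
    return "\n".join(f"[{i}] {p.source}" for i, p in enumerate(passages, start=1))


retrieved = [
    Passage("Refunds are accepted within 30 days of purchase.", "refund-policy.pdf"),
    Passage("Shipping takes 3 to 5 business days.", "shipping-faq.html"),
]
context = format_context(retrieved)
citations = format_citations(retrieved)
print(citations)
```

Because both lists use the same numbering, a marker such as `[1]` in the generated answer can be traced back to the document it came from.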

Popular Retrieval-Augmented Generation Tools

Several platforms and frameworks simplify RAG implementation:

1. LangChain

An open-source framework that helps developers orchestrate retrieval pipelines and connect LLMs to external data sources.

2. LlamaIndex

Designed specifically for data ingestion and indexing, LlamaIndex simplifies document-to-vector workflows.

3. Pinecone

A managed vector database optimized for large-scale similarity search.

4. Weaviate

An open-source vector database supporting hybrid search and filtering.

5. Azure AI Search

An enterprise-ready search solution that integrates with language models for RAG use cases.

Comparison Chart of Popular RAG Tools

| Tool | Primary Function | Best For | Hosting | Open Source |
|---|---|---|---|---|
| LangChain | LLM orchestration framework | Custom AI pipelines | Self-hosted | Yes |
| LlamaIndex | Data indexing pipeline | Document-heavy RAG systems | Self-hosted | Yes |
| Pinecone | Vector database | Scalable semantic search | Cloud managed | No |
| Weaviate | Vector database | Hybrid search applications | Cloud or self-hosted | Yes |
| Azure AI Search | Enterprise search integration | Corporate environments | Cloud managed | No |

Use Cases for RAG in AI Applications

Retrieval-Augmented Generation software powers a wide variety of intelligent systems:

Enterprise Knowledge Assistants

Employees can ask natural language questions about HR policies, onboarding documentation, or internal technical procedures.

Customer Support Automation

Chatbots retrieve answers directly from product documentation, reducing incorrect responses.

Legal and Compliance Research

Professionals can query regulatory texts and case law with citations included in the output.

E-commerce Search

Product recommendations become more contextual and aligned with real inventory data.

Healthcare Knowledge Systems

Medical professionals can query updated clinical documents safely within authorized environments.

Challenges When Implementing RAG

Despite its advantages, implementing RAG software comes with technical considerations:

- Data quality: Inaccurate or outdated documents lead to flawed outputs.

- Chunking strategy: Poor chunk sizes affect retrieval relevance.

- Latency: Retrieval plus generation can increase response time.

- Security: Sensitive documents must be properly protected.

- Evaluation complexity: Measuring retrieval accuracy is not always straightforward.

Careful system design and monitoring are essential to maximize benefits.
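
Two of the considerations above, chunking strategy and evaluation, lend themselves to a small sketch. It assumes overlapping word windows as one possible chunking strategy and recall@k as one possible retrieval metric; the source does not prescribe either, and the function names and document IDs are hypothetical.

```python
def chunk_with_overlap(text: str, size: int = 5, overlap: int = 2) -> list[str]:
    """Split text into word windows of `size`, each sharing `overlap` words with the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    # Stop once the remaining words are already covered by the previous window.
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


def recall_at_k(retrieved_ids: list[str], relevant_ids: set[str], k: int) -> float:
    """Fraction of the known-relevant documents that appear in the top k results."""
    if not relevant_ids:
        raise ValueError("relevant_ids must not be empty")
    hits = set(retrieved_ids[:k]) & relevant_ids
    return len(hits) / len(relevant_ids)


chunks = chunk_with_overlap("a b c d e f g h", size=5, overlap=2)
score = recall_at_k(["d3", "d1", "d7"], {"d1", "d2"}, k=2)
print(chunks, score)
```

Running `recall_at_k` over a hand-labeled set of questions while varying `size` and `overlap` is one way to compare chunking settings on a given corpus.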

Best Practices for Building Smarter AI Apps with RAG

Developers can improve effectiveness by following structured best practices:

- Invest in clean, structured data.

- Experiment with different embedding models.

- Use hybrid search (keyword + vector search).

- Implement feedback loops for continuous improvement.

- Provide citations in responses.

Additionally, incorporating evaluation benchmarks helps maintain long-term performance quality.
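
The hybrid search recommendation above needs some way to merge a keyword ranking with a vector ranking. The sketch below uses reciprocal rank fusion, which is one common choice and an assumption here, since the text does not name a fusion method. The function name, the constant `k=60`, and the document IDs are illustrative.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists: each document scores 1 / (k + rank) per list it appears in."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties are broken alphabetically for stable output.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


keyword_ranking = ["doc_a", "doc_b", "doc_c"]  # e.g. from a keyword (lexical) search
vector_ranking = ["doc_b", "doc_d", "doc_a"]   # e.g. from an embedding similarity search
fused = reciprocal_rank_fusion([keyword_ranking, vector_ranking])
print(fused)
```

Documents that rank well in both lists rise to the top, while a document found by only one method still appears further down instead of being dropped.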

The Future of Retrieval-Augmented Generation Software

As AI infrastructure matures, RAG systems are evolving to include:

- Multimodal retrieval (text, images, audio, video)

- Real-time API integrations

- Autonomous agents with retrieval capabilities

- Improved reasoning over structured databases

Future advancements will likely blend RAG with fine-tuning, reinforcement learning, and agentic workflows. These hybrid architectures will create AI systems capable of complex, trustworthy decision-making.

Conclusion

Retrieval-Augmented Generation software represents a major step forward in building smarter AI applications. By combining real-time retrieval with powerful language generation, organizations gain greater accuracy, transparency, and control. From customer service bots to enterprise research assistants, RAG enables AI systems to ground their answers in retrieved source documents rather than in the model's learned probabilities alone. As adoption grows, businesses that implement well-architected RAG pipelines will gain a competitive advantage in delivering intelligent, trustworthy AI experiences.

Frequently Asked Questions (FAQ)

1. What is the main purpose of Retrieval-Augmented Generation software?

The main purpose is to improve AI accuracy by retrieving relevant information from external data sources before generating a response.

2. How does RAG reduce hallucinations?

By grounding responses in retrieved documents, the model draws on the supplied source text rather than relying only on its training data. The answer is still only as reliable as the documents that were retrieved.

3. Do companies need to retrain models when using RAG?

No. Most RAG systems allow knowledge updates without retraining the core language model.

4. Is RAG suitable for small businesses?

Yes. Many open-source frameworks make implementation accessible for startups and smaller organizations.

5. What industries benefit the most from RAG?

Industries dealing with complex, document-heavy workflows, such as healthcare, legal, finance, and enterprise IT, benefit significantly.

6. What is required to build a RAG-based app?

Developers typically need a language model API, an embedding model, a vector database, a data ingestion pipeline, and orchestration software to connect components.

7. Can RAG work with private or secure data?

Yes, provided proper security measures, encryption, and access controls are implemented.
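
As one illustration of access controls in a retrieval pipeline, chunks can carry the groups allowed to read them, and the retriever can drop anything the current user may not see before ranking. This is a minimal sketch under that assumption; the `Chunk` class, the `visible_chunks` helper, and the group names are hypothetical, and a real deployment would also need authentication and encryption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    allowed_groups: frozenset[str]  # groups permitted to read this chunk (illustrative)


def visible_chunks(chunks: list[Chunk], user_groups: set[str]) -> list[Chunk]:
    """Keep only chunks that share at least one group with the user."""
    return [c for c in chunks if c.allowed_groups & user_groups]


store = [
    Chunk("Salary bands for 2024.", frozenset({"hr"})),
    Chunk("Office opening hours.", frozenset({"hr", "staff"})),
]
allowed = visible_chunks(store, {"staff"})
print([c.text for c in allowed])
```

Filtering before retrieval, not after generation, keeps restricted text out of the prompt entirely.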